Can AI Replace Human Analysts? Beyond Automation, Limits, and Control

Financial crime is becoming more complex. It evolves faster and is more fragmented, which makes it harder to detect as activity unfolds. At the same time, compliance teams are drowning in alerts and false positives while regulatory expectations keep rising. Under this pressure, AI AML compliance has become central to enterprise compliance operations.

But underneath the hype sits a more difficult question:

Can AI replace humans in AML compliance, or is it quietly redefining how decisions are made?

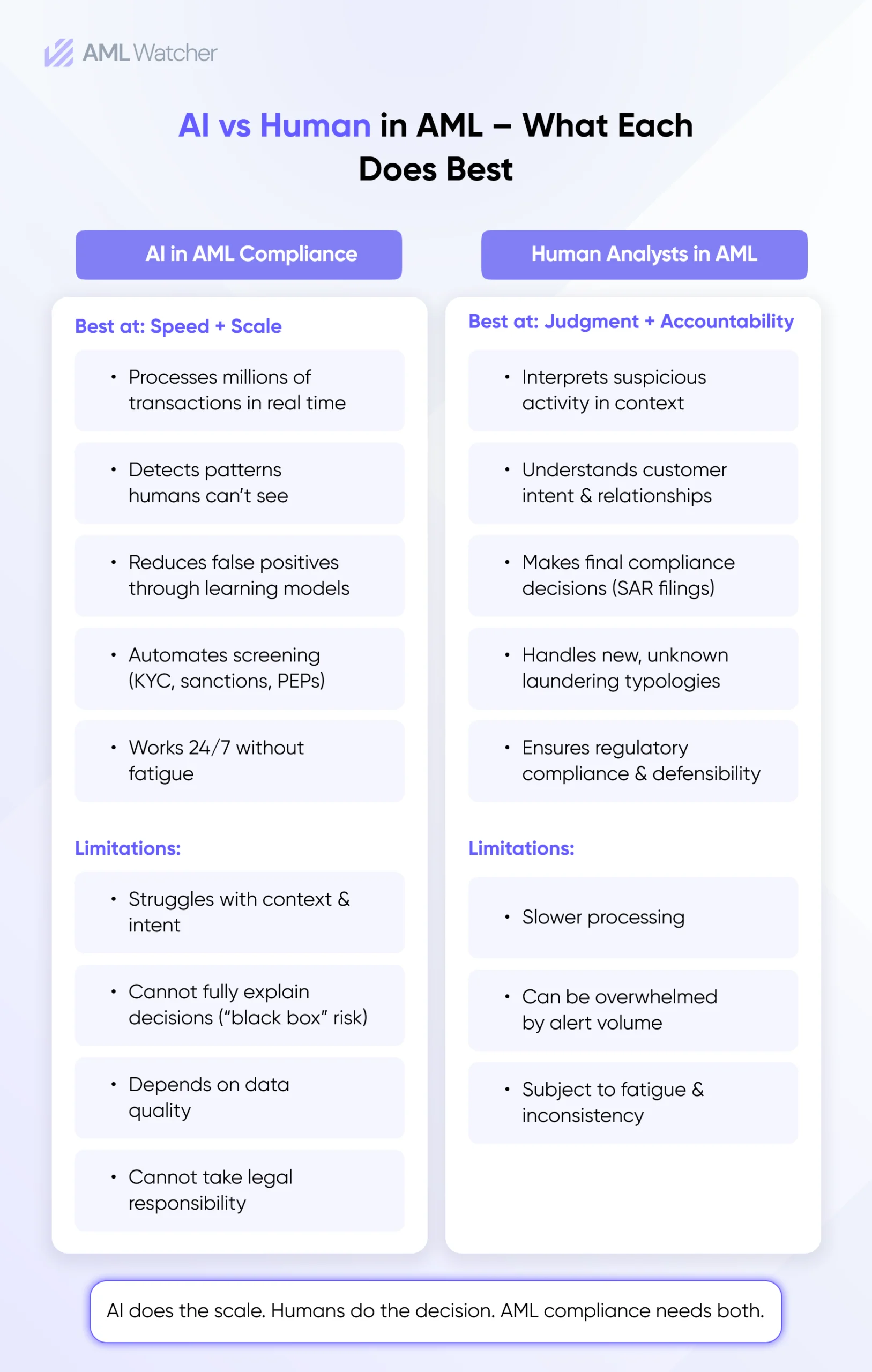

In reality, the shift is less about replacing humans than about redistributing responsibility. In modern AML compliance, machines handle speed and scale, while humans are pushed deeper into judgment, governance, and regulatory interpretation. The real transformation is not automation itself but who holds responsibility for final decisions.

Why AI in AML Compliance Is Accelerating Across Financial Institutions

Financial institutions are not adopting AI for compliance solely to modernize their systems. The main driver is the structural failure of legacy AML systems.

Traditional rules-based monitoring tools were not designed for today's transactional complexity. They rely on static thresholds, outdated typologies, and rigid rules, which generate an excessive number of alerts. As transaction volumes grow, compliance teams face three unavoidable challenges:

- Alert volumes that exceed analyst capacity

- High false-positive rates that consume analyst time and reduce operational efficiency

- Slow responses to new laundering techniques

This is where artificial intelligence in financial compliance comes into play. Machine learning systems help institutions process millions of transactions in real time, flag activity that breaks established patterns, and refine detection parameters as those patterns evolve.
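To make the idea of a behavioral baseline concrete, here is a minimal Python sketch. It is an illustration, not how any particular product works: each new transaction amount is compared with the customer's own recent history using a rolling z-score, and the baseline is re-estimated at every step so it follows changing behavior. The function name, window size, and threshold are illustrative assumptions. Production models use many more features than amount alone.

```python
from statistics import mean, stdev


def flag_anomalies(amounts, window=10, threshold=3.0):
    """Return indices of amounts that deviate sharply from the preceding window.

    The baseline (mean and standard deviation) is recomputed over the last
    `window` values at every step, so it adapts as behavior changes.
    `window` must be at least 2 for stdev to be defined.
    """
    flagged = []
    for i in range(window, len(amounts)):
        recent = amounts[i - window:i]
        mu, sigma = mean(recent), stdev(recent)
        if sigma == 0:
            # Perfectly flat history: any change at all is a deviation.
            if amounts[i] != mu:
                flagged.append(i)
        elif abs(amounts[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


transactions = [100, 120, 95, 110, 105, 98, 102, 115, 99, 108, 5000]
print(flag_anomalies(transactions))  # [10]
```

In this sketch, flagged values stay in the rolling window. A real system might exclude confirmed anomalies so they do not inflate the baseline.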

In practice, AI-driven AML systems move institutions from reactive, rules-based monitoring to behavior-focused monitoring. This makes operations more efficient, but it does not remove the need for analyst review. Instead, it changes the point at which decisions are made.

Where AI Performs Better than Humans in AML Workflows

In some parts of AML operations, the technology clearly outperforms human analysts, primarily in scale, processing speed, and behavioral pattern recognition.

1. Large-scale Pattern Detection

AI models can spot subtle correlations across millions of transactions that human teams would struggle to find manually. These include behavioral deviations, transaction clusters, and unusual activity spanning multiple entities.
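Cross-entity detection starts with knowing which accounts belong together. The sketch below is a hypothetical illustration built on a standard union-find structure. It groups accounts that are connected by transfers, so activity can be assessed per cluster rather than per account. The input format, a list of (sender, receiver) pairs, is an assumption. Linking accounts on other shared attributes would work the same way.

```python
def cluster_entities(transfers):
    """Group accounts connected by transfers, using union-find.

    transfers: iterable of (sender, receiver) account-ID pairs.
    Returns a list of sets, one per connected group of accounts.
    """
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    for sender, receiver in transfers:
        union(sender, receiver)

    groups = {}
    for account in list(parent):
        groups.setdefault(find(account), set()).add(account)
    return list(groups.values())


transfers = [("A", "B"), ("B", "C"), ("D", "E")]
for group in cluster_entities(transfers):
    print(sorted(group))
# ['A', 'B', 'C']
# ['D', 'E']
```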

2. Real-Time Monitoring and Screening

In modern AI compliance systems, sanctions screening, politically exposed person (PEP) checks, and adverse media scanning run continuously. These systems can analyze global datasets in real time, shortening the time between suspicious activity and its detection.

3. Reducing Repetitive Compliance Tasks

AI is highly effective in:

- Initial alert triaging

- Data enrichment for case files

- Name matching and deduplication

- Preliminary risk scoring
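Name matching and deduplication are among the most mechanical of these tasks. The following minimal Python sketch uses only the standard library (`unicodedata` and `difflib.SequenceMatcher`). It normalizes accents, punctuation, case, and word order before scoring similarity. The function names and the 0.85 threshold are hypothetical. Commercial screening engines typically layer transliteration, phonetic matching, and alias handling on top of this kind of baseline.

```python
import unicodedata
from difflib import SequenceMatcher


def normalize(name):
    """Lower-case, strip accents and punctuation, and sort name tokens."""
    decomposed = unicodedata.normalize("NFKD", name)
    no_accents = "".join(c for c in decomposed if not unicodedata.combining(c))
    cleaned = "".join(c for c in no_accents.lower() if c.isalnum() or c.isspace())
    return " ".join(sorted(cleaned.split()))  # "Garcia, Jose" == "Jose Garcia"


def screen(name, watchlist, threshold=0.85):
    """Return (list_entry, similarity) pairs at or above the threshold, best first."""
    target = normalize(name)
    hits = []
    for entry in watchlist:
        score = SequenceMatcher(None, target, normalize(entry)).ratio()
        if score >= threshold:
            hits.append((entry, round(score, 2)))
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


def deduplicate(names):
    """Keep the first spelling of each name that normalizes to the same key."""
    seen = {}
    for name in names:
        seen.setdefault(normalize(name), name)
    return list(seen.values())


print(screen("Jose Garcia", ["GARCÍA, José", "John Smith"]))  # [('GARCÍA, José', 1.0)]
print(deduplicate(["José Garcia", "Jose Garcia", "garcia, jose"]))  # ['José Garcia']
```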

This is where automated compliance monitoring proves its value in day-to-day operations. It eliminates repetitive work and lets investigators spend their time investigating rather than sorting data.

However, efficiency does not equal autonomy. These systems still depend on structured data, on the quality of their training, and on previously observed behavior. Those dependencies matter most in regulated scenarios.

Where Human Oversight Still Matters in AML Compliance Decision-Making

While AI has progressed rapidly in AML, there are still areas where experienced investigators play a central role.

1. Contextual Judgment in Investigations

AML investigations often do not proceed in a straight line. A transaction can look suspicious on its own yet be entirely ordinary in context. Human analysts interpret:

- Customer intent

- Business relationships

- Broader commercial and geographic context

These subtle interpretations are where AI struggles, because it has no real-world reasoning beyond the patterns in its data.

2. Regulatory Interpretation and Accountability

Regulators such as the Financial Crimes Enforcement Network (FinCEN), the European Banking Authority (EBA), the Financial Conduct Authority (FCA), and the Financial Action Task Force (FATF) all emphasize the same point: accountability cannot be handed over to machines.

Institutions may use AI to support decisions, but doing so does not reduce their legal responsibility for the outcome.

3. Emerging Typologies and Adaptive Criminal Behavior

Financial crime moves faster than model training cycles. When new laundering methods emerge, such as the misuse of digital assets or new forms of cross-border layering, monitoring systems typically lack enough data to identify the pattern with confidence.

4. Ethical and High-Risk Decisions

Regulators require human accountability for decisions such as filing Suspicious Activity Reports (SARs), which involve assessing intent, proportionality, and risk tolerance.

This directly answers the question “Can AI replace humans in AML?” AI cannot replace accountability, judgment, or regulatory responsibility.

Why Human-In-The-Loop AI is Emerging as a Regulatory Requirement

Human-in-the-loop AI is no longer just a design preference. It is becoming a compliance requirement.

Regulators are making it clear that AI systems need to be explainable, auditable, and supervised by humans. Key regulatory expectations include:

- The EU AI Act imposes transparency and human-oversight obligations on AI systems classified as high-risk. Institutions must take this framework into account when deploying AI in AML.

- FinCEN emphasizes human validation at key AML decision points, such as SAR filing and sanctions escalation.

- The FCA holds senior management accountable for the outcomes of their firms' AI-driven compliance.

- The Monetary Authority of Singapore (MAS) sets expectations for explainability in the AI systems institutions use for AML.

The structural requirement is clear: automated systems can support the decision-making process, but humans must remain in control of the decision.

In practical terms, “human in the loop” refers to a process in which AI identifies risk signals, and then humans assess, modify, or escalate those findings. Ultimately, the final responsibility remains with a designated compliance officer.
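One way to enforce this structure in software is to keep the model's output (a score and a reason) separate from the final outcome. The system then refuses any outcome that lacks a named reviewer and a written rationale. The sketch below is hypothetical. The `Alert` fields, the decision labels, and `record_decision` are illustrative names, not a regulatory standard or a specific product's API.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

# Hypothetical outcome labels; an institution would define its own.
ALLOWED_DECISIONS = {"close", "escalate", "file_sar"}


@dataclass
class Alert:
    alert_id: str
    model_score: float            # AI output: a risk signal, not a decision
    model_reason: str             # explanation kept for audit
    decision: Optional[str] = None
    decided_by: Optional[str] = None
    rationale: Optional[str] = None
    decided_at: Optional[datetime] = None


def record_decision(alert: Alert, decision: str, reviewer: str, rationale: str) -> Alert:
    """Set the final outcome; only a named human with a rationale may do so."""
    if decision not in ALLOWED_DECISIONS:
        raise ValueError(f"unknown decision: {decision!r}")
    if not reviewer or not rationale:
        raise ValueError("a named reviewer and a written rationale are required")
    if alert.decision is not None:
        raise ValueError("alert already decided; a change needs a new review record")
    alert.decision = decision
    alert.decided_by = reviewer
    alert.rationale = rationale
    alert.decided_at = datetime.now(timezone.utc)
    return alert


alert = Alert("ALERT-001", model_score=0.91, model_reason="rapid pass-through transfers")
record_decision(alert, "escalate", reviewer="analyst.jdoe",
                rationale="Pattern consistent with layering; needs senior review.")
print(alert.decision, alert.decided_by)  # escalate analyst.jdoe
```

Because the model never writes to the decision fields, the audit trail always shows which person made each final call and why.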

Without this structure, institutions face an ongoing risk of falling short of regulatory requirements, failing audits, and being unable to defend their decisions during investigations.

Risks of Over-Automation in Financial Compliance Systems

While AI can streamline financial compliance processes, over-reliance on it creates new risks that regulators are watching closely.

- Black-Box Decision-Making: When AI systems are unable to provide a clear reason for an alert, compliance teams have no way to defend their decisions in the event of an audit or investigation.

- Bias and Model Drift: A poorly trained model can absorb bias from its training data and score customer segments inconsistently. A model's accuracy can also degrade as real-world behavior drifts away from the data it was trained on.

- Data Quality Dependency: AI can only deliver reliable output if it is built on high-quality data. Incomplete KYC data and customer records can produce inaccurate risk outcomes.

- AI Hallucination and False Confidence: Advanced models may generate confident but incorrect conclusions, especially in complex investigative scenarios.
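Model drift, in particular, can be monitored rather than discovered after the fact. A common technique is the population stability index (PSI), which compares the distribution of model scores at validation time with a recent sample. The sketch below assumes scores in [0, 1] and non-empty samples. The thresholds often quoted in practice (below about 0.1 is stable, above about 0.25 is a significant shift) are rules of thumb, not regulatory limits. PSI addresses drift only. Checking for bias needs a separate comparison across customer segments.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline score sample and a recent one (scores in [0, 1])."""

    def shares(sample):
        counts = [0] * bins
        for score in sample:
            counts[min(int(score * bins), bins - 1)] += 1  # 1.0 goes in the last bin
        # A small floor keeps log() defined when a bin is empty.
        return [max(c / len(sample), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]              # scores at validation
recent = [min(s + 0.3, 1.0) for s in baseline]        # hypothetical shifted scores
print(round(population_stability_index(baseline, baseline), 3))  # 0.0
print(population_stability_index(baseline, recent) > 0.25)       # True
```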

These risks highlight a core principle of AI compliance: automation without governance raises risk rather than lowering it.

The Hybrid Model Shaping Modern AML Compliance

Leading institutions are moving toward a hybrid structure that balances automation with accountability. The model works in three steps:

- First, AI takes charge of detection, screening, and prioritization.

- Next, human experts oversee the investigation and interpretation and then make final decisions.

- Lastly, continuous feedback loops are established to enhance the model’s accuracy over time.

This strategy is not about restricting AI; rather, it focuses on effectively integrating it within a compliance framework.

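In practice, the workflow runs in that order: AI screens, scores, and prioritizes activity; an analyst investigates the prioritized alerts and decides the outcome; and each decision feeds back into the model.

This structure enables AI AML compliance to improve operational efficiency without weakening governance.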
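The third step, the feedback loop, can start as simply as summarizing analyst outcomes by model score band, which shows where alerts are mostly noise. The sketch below assumes the model emits scores in [0, 1] and analysts record whether each alert was confirmed as suspicious. `feedback_by_band` is a hypothetical name. In keeping with the hybrid model, the output is evidence for a human-approved retraining or tuning decision, not an automatic threshold change.

```python
from collections import defaultdict


def feedback_by_band(dispositions, bins=10):
    """Summarize analyst outcomes per model-score band.

    dispositions: iterable of (model_score, confirmed) pairs, where confirmed is
    True if the analyst judged the alert genuinely suspicious.
    Returns {band_start: (alerts, confirmed, precision)}, ordered by band.
    """
    counts = defaultdict(lambda: [0, 0])  # band_start -> [alerts, confirmed]
    for score, confirmed in dispositions:
        band_start = min(int(score * bins), bins - 1) / bins
        counts[band_start][0] += 1
        counts[band_start][1] += int(confirmed)
    return {
        band: (n, hits, round(hits / n, 2))
        for band, (n, hits) in sorted(counts.items())
    }


closed_alerts = [(0.95, True), (0.92, False), (0.55, False), (0.52, False)]
print(feedback_by_band(closed_alerts))
# {0.5: (2, 0, 0.0), 0.9: (2, 1, 0.5)}
```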

What This Shift Means for Compliance Teams

Compliance roles are shifting away from manual alert handling and routine case reviews and toward oversight, analysis, and governance. As AI takes on a larger role in AML, compliance teams must review and confirm AI outputs, evaluate high-risk escalations, and verify that AI-driven processes comply with internal policies and regulatory requirements. This shift turns compliance from an operational function into a more strategic discipline, one centered on risk interpretation, accountability, and audit defensibility.

As the technology evolves, compliance teams also need a more highly skilled workforce. Analysts must recognize the limitations of AI models, interpret the risk signals those models produce, and judge when human intervention is needed. Institutions also need professionals who can oversee model governance frameworks, document decision-making processes, and provide regulatory justification during an audit or investigation. In practice, AI is not reducing the importance of compliance teams; it is redefining where their expertise creates the most value.

AI Is Reshaping AML, Not Replacing It

In AML compliance, the question is no longer “Can AI replace humans?” The real question is how institutions design systems in which AI handles scale while humans retain accountability.

In AML, automation is not the endpoint; it is the infrastructure. The decision still belongs to humans, and regulators are making sure it stays that way.

For compliance teams, the future is not AI versus humans. It is AI with humans, operating in clearly defined, accountable workflows.

How AML Watcher Enables Balanced AI AML Compliance

Many compliance teams face the same operational challenge. They know they need AI to manage screening and monitoring volume, but they lack the confidence in automated outputs needed to reduce manual review. As a result, they run both in parallel, which limits the operational value of automation.

AML Watcher is designed around a simple principle: AI should reduce the work that does not require judgment, so human analysts focus on the work that does.
