The MLRO's Playbook: Board Reporting, Regulatory Exam Preparation, and Programme Governance

TD Bank. Over $3 billion. An asset cap that froze its US retail growth.

The board was informed. The board approved. And the compliance architecture still failed, not because a transaction slipped through, but because the governance structure could not hold. In October 2024, TD Bank pleaded guilty to conspiring to fail to maintain a BSA-compliant AML programme and agreed to pay over $3 billion in combined penalties to the DOJ, FinCEN, the OCC, and the Federal Reserve, the largest BSA enforcement action in US history. The bank was cited specifically for prioritising growth over controls, inadequate board oversight, and a compliance architecture that could not hold against business line pressure, which makes the case the single most complete illustration of AML programme governance failure on record. Whatever structural deficiency your programme may carry, an example of it is already in the public record.

That case sits alongside an enforcement ledger that showed no sign of softening: forty-two BSA/AML enforcement actions in 2024 alone, with boards directed to enhance AML oversight in approximately half of them. BSA officers cited for lacking unilateral SAR-filing authority. Compliance staff found reporting through business line management rather than through the designated officer. The transaction monitoring problems, the CDD gaps, and the SAR delays were symptoms. The root cause, in case after case, was a governance structure that could not hold.

Regulators have absorbed this lesson and are formalising it into the rulebook. FinCEN's June 2024 Notice of Proposed Rulemaking introduced a mandatory risk assessment as a sixth pillar and the required foundation of every AML/CFT programme, while also requiring all covered institutions to establish board oversight and approval for each programme component, including "appropriate and effective oversight measures, such as governance mechanisms, escalation and reporting lines."

Important update as of early 2026: this rule remains proposed, not finalised, and the current regulatory environment signals a preference for risk-based flexibility over prescriptive requirements. The governance argument, however, does not depend on the rule being enacted: the 2025 enforcement record proves it.

This article addresses what that means in operational terms: what the MLRO annual report must contain, how board reporting should be structured, what a regulatory examination expects to see, and where escalation frameworks break down in institutions that otherwise believe their programmes are sound.

What Regulators Actually Examine

The FFIEC BSA/AML Examination Manual has long identified five programme pillars: internal controls, independent testing, a designated compliance officer, training, and customer due diligence. Enforcement actions in 2024 cited deficiencies in all of the first four pillars in a significant proportion of cases: not one or two gaps, but systemic failures across multiple components simultaneously.

The more significant regulatory shift is the treatment of governance itself as an examination variable. Examiners now assess not only whether controls exist, but whether the institutional structure permits those controls to function. Two specific governance failures appeared repeatedly in 2024 orders:

- Authority deficits — BSA officers who lacked unilateral authority to file SARs. In at least one case, a senior manager or committee of business managers retained ultimate filing authority, directly compromising the independence that effective compliance requires.

- Reporting line failures — AML monitoring and compliance staff reporting through business line management rather than directly to the BSA officer. This structural arrangement weakens the designated officer's authority and creates the conditions under which commercial pressure displaces compliance judgment.

Neither failure requires a complex transaction monitoring gap to produce an enforcement action. They are governance defects, and they are now explicitly within scope.

The MLRO Annual Report: A Practitioner Standard

When a regulator opens your MLRO annual report, they are not reading to learn what your compliance team did. They are assessing whether your board understood what your compliance team did and whether that understanding was documented before the examination notification arrived. That distinction determines whether the report passes supervisory scrutiny or becomes the first exhibit in an enforcement discussion.

The MLRO annual report is not an activity log. It is the primary evidence that senior management is exercising meaningful AML oversight. Examiners review it during inspections across every major jurisdiction. It will be read by two audiences, the board and the regulator, and the drafting standard must satisfy both.

Regulators in multiple jurisdictions have issued prescriptive guidance on its required content. The Qatar Financial Centre Regulatory Authority (QFCRA) requires the MLRO to assess "the adequacy and effectiveness of the firm's policies, procedures, systems and controls." The UAE Securities and Commodities Authority and the Malta Financial Services Authority impose comparable requirements. The standard across jurisdictions is substantive assessment, not activity reporting.

A defensible MLRO annual report addresses seven components:

| Component | What It Must Include |
| --- | --- |
| 1. AML/CFT Risk Profile | Current assessment of inherent risk across customers, products, geographies, and channels. Explicit identification of changes since the prior report. EWRA referenced with material deviations explained. |
| 2. Control Effectiveness | Assessment of whether key controls, such as transaction monitoring, CDD, SAR filing, and sanctions screening, are operating as designed. Must include quantitative data: alert volumes, false positive rates, investigation backlogs, SAR quality metrics. Qualitative narrative without data is insufficient under current supervisory expectations. |
| 3. SAR Reporting Statistics | Volume and trend data on internal disclosures and external filings, including identified delays. Regulators assess no-SAR decisions with the same scrutiny applied to filed SARs. Both categories require documentation. |
| 4. Training and Awareness | Completion rates by role, assessment outcomes, and thematic gaps identified through internal reporting or audit findings. |
| 5. Independent Testing Results | Findings from internal audit and any external reviews, with explicit status of prior-period remediation. Open findings carried forward without remediation are a consistent examination finding. |
| 6. Resource Assessment | A frank statement of whether the compliance function has adequate staffing, technology, and budget. MLROs who formally document resource constraints and escalate them are demonstrably better positioned before examiners than those who absorb shortfalls silently. |
| 7. Remediation Tracker | Open findings, accountable owners, target dates, and current status. Structured to allow the board to assess progress without requiring the MLRO to narrate it in full at each meeting. |

The report is addressed to senior management. It will be read by examiners. The drafting standard should reflect both audiences.
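As an illustration of the remediation tracker described in component 7, the following is a minimal sketch of how open findings might be held in a structured form so that overdue items surface without narration. The `Finding` record and the `overdue` helper are hypothetical names introduced here for illustration; the fields simply mirror the four elements listed in the table (finding, accountable owner, target date, status).

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Finding:
    """One remediation tracker entry (hypothetical layout)."""
    ref: str            # finding reference, e.g. an audit report item
    owner: str          # accountable owner
    target_date: date   # agreed remediation date
    status: str         # "open" or "closed" (assumed status vocabulary)


def overdue(findings: list[Finding], as_of: date) -> list[Finding]:
    """Return open findings whose target date has passed, oldest first."""
    late = [f for f in findings if f.status == "open" and f.target_date < as_of]
    return sorted(late, key=lambda f: f.target_date)


tracker = [
    Finding("IA-01", "Head of Operations", date(2025, 3, 31), "open"),
    Finding("IA-02", "MLRO", date(2025, 1, 31), "open"),
    Finding("IA-03", "Head of Retail", date(2025, 2, 28), "closed"),
]
late_items = overdue(tracker, as_of=date(2025, 4, 15))
```

A list of this kind lets the board see carried-forward findings at a glance; the status vocabulary and reference format would follow whatever the institution's audit function already uses.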

Board Reporting: A Three-Tier KPI Framework

Board Risk Committees routinely receive AML reporting that falls into one of two failure modes: granular alert data without interpretive context, or RAG-status dashboards without the quantitative grounding that makes a rating meaningful. Both approaches fail the oversight test that regulators now apply.

An effective board reporting framework communicates programme health through defined KPIs, presented consistently, with a narrative that explains movement and identifies emerging risks. The framework below is designed for quarterly board reporting and is adaptable for semi-annual MLRO reporting in jurisdictions where that cadence is required.

Tier 1: Programme Integrity Metrics

These metrics assess whether the AML programme is functioning as designed.

| Metric | What It Measures | Board Relevance |
| --- | --- | --- |
| SAR filing timeliness | Percentage of SARs filed within the regulatory deadline | Late filings are the most common single trigger for enforcement action |
| Alert-to-SAR conversion rate | Proportion of transaction monitoring alerts escalating to SAR | Abnormally low rates indicate over-alerting or under-investigation; abnormally high rates indicate under-alerting |
| False positive rate | Percentage of alerts closed as non-suspicious | Reflects the monitoring system calibration and investigator quality |
| Investigation backlog | Open investigations beyond the defined SLA | Backlogs signal resource constraints that produce SAR filing delays |
| CDD completion rate | Customer files meeting current CDD/EDD standards | Incomplete files are a leading indicator of examination findings |

The false positive rate demands particular attention at the board level. Industry data indicates that approximately 90% of AML alerts generated by transaction monitoring systems are false positives, meaning for every 100 alerts, roughly 90 are noise. A board that sees only aggregate alert volumes without false positive context cannot assess whether its investigators have the capacity to identify genuine suspicious activity.

A high false positive rate is not a calibration footnote. It is the metric that tells the board whether the programme is producing risk intelligence or producing analyst fatigue. When investigators are clearing noise for the majority of their working day, the real threats get less time. That is not a technology problem in isolation; it is a programme integrity problem that belongs in front of the board.

Examination risk note: When a regulator reviews an institution's alert disposition data and sees a 90% close rate without documented calibration evidence, that becomes an open question in the examination. Examiners assess whether close rates are defensible. A 90% false positive rate without documented calibration evidence is not a programme strength; it is an unresolved question about whether real alerts are being buried.
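To make the arithmetic behind three of the Tier 1 metrics concrete, here is a minimal sketch that derives the alert-to-SAR conversion rate, the false positive rate, and the backlog beyond SLA from a list of alert records. The `Alert` layout, the `tier1_metrics` function, and the choice to calculate rates over closed alerts only are assumptions made for illustration; an institution's own definitions may differ and should be documented alongside the figures.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Alert:
    """One transaction monitoring alert (hypothetical record layout)."""
    opened: date
    closed: Optional[date]  # None while the investigation is still open
    sar_filed: bool         # True if the alert led to an external SAR


def tier1_metrics(alerts: list[Alert], as_of: date, sla_days: int) -> dict[str, float]:
    """Compute illustrative Tier 1 figures; rates use closed alerts as the base."""
    closed = [a for a in alerts if a.closed is not None]
    sars = [a for a in closed if a.sar_filed]
    non_suspicious = [a for a in closed if not a.sar_filed]
    # Open investigations older than the SLA count towards the backlog.
    backlog = [
        a for a in alerts
        if a.closed is None and (as_of - a.opened).days > sla_days
    ]
    base = len(closed) or 1  # avoid division by zero when nothing is closed
    return {
        "alert_to_sar_rate": len(sars) / base,
        "false_positive_rate": len(non_suspicious) / base,
        "backlog_over_sla": float(len(backlog)),
    }
```

With 90 alerts closed as non-suspicious and 10 escalated to SAR, the function returns a false positive rate of 0.9 and a conversion rate of 0.1, matching the "90 in 100" illustration above.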

Tier 2: Risk Exposure Metrics

These metrics communicate changes in the institution's inherent risk landscape. A board that reviews only programme integrity metrics sees whether controls are working — not whether the risk environment those controls operate within has shifted.

| Metric | What It Measures |
| --- | --- |
| High-risk customer population | Number and percentage of customers rated high risk; period-over-period movement |
| PEP and sanctions exposure | Active PEP relationships and sanctions matches currently under review |
| Geographic risk concentration | Exposure to FATF high-risk and monitored jurisdictions; cross-border flow volumes |
| New product/channel risk | AML risk assessment status for products, services, or customer segments launched in the period |

Tier 3: Governance and Accountability Metrics

These metrics provide evidence that the Three Lines of Defence are operating with meaningful separation, not merely documented as doing so.

| Metric | What It Measures |
| --- | --- |
| Internal audit findings | Number of AML findings, severity classification, and remediation status |
| Regulatory interaction log | Examinations, information requests, and formal correspondence in the period |
| Training completion | Programme-wide rates; role-specific rates for high-risk functions |
| Policy review status | Whether AML policies have been reviewed and approved within the required cycles |

Each board pack should include a one-page executive narrative covering: what has changed since the prior report, what risks are emerging or receding, and what, if anything, requires board decision or resource allocation. The board's function is oversight, not management. The reporting structure should make that distinction operationally clear.

The Three Lines of Defence: Where Theory Meets Examination

The Three Lines of Defence model is a governance standard, not an organisational formality. Regulators assess whether the three lines are operationally distinct, not whether a diagram in the governance framework document shows them as such.

For the MLRO, operational separation requires:

- First Line: Business units own day-to-day AML control execution. They complete CDD, apply policies, and submit internal SARs to the MLRO function. These obligations must appear in role descriptions and performance frameworks, not only in the compliance policy. The MLRO does not perform first-line functions.

- Second Line: The MLRO function sets policy, monitors control effectiveness, investigates internal referrals, makes external filing decisions, and reports independently to senior management and the board. Two structural requirements are non-negotiable: a reporting line that does not pass through any business head with revenue accountability, and direct, unobstructed access to the board. Both must be documented in the MLRO's terms of appointment.

The 2024 enforcement record confirms that regulators will cite the absence of either as a programme deficiency. Under FATF Recommendation 26, competent authorities are required to assess whether financial institutions maintain adequate AML/CFT controls commensurate with their risk profile. FATF Recommendation 35 establishes that jurisdictions must be able to apply civil, administrative, or criminal sanctions where programme governance is found deficient. Together, these recommendations form the supervisory basis for the governance-focused enforcement actions that characterised 2024 and 2025.

- Third Line: Internal audit independently assesses whether first-line controls and second-line oversight are functioning. The MLRO should not direct third-line scope but should ensure AML receives adequate coverage in the annual audit plan and that findings are formally escalated to the board.

The governance documentation pack, the document set that a regulator requests on Day 1 of an examination, must evidence each line operating distinctly. This means organisational charts with actual reporting lines, Board Risk Committee terms of reference, documented MLRO authority and escalation protocols, and board-approved AML policies with version history.

Regulatory Exam Preparation: A Structured Evidence Checklist

Exam-readiness is not a pre-notification sprint. Institutions that perform best under examination treat it as a continuous governance standard. The following checklist reflects what examiners consistently request across major jurisdictions: FFIEC BSA/AML examinations, FCA supervisory reviews, and FATF-aligned regimes operating under the governance expectations of Recommendations 26 and 35.

Governance and Documentation

- Enterprise-wide risk assessment (EWRA), dated within the past 12 months or updated following any material business change

- AML/CFT policies and procedures, board-approved, with version history and approval dates

- MLRO annual report for the current and prior two periods

- Board and Board Risk Committee minutes evidencing AML discussion, challenge, and approval of key programme decisions

- Three Lines of Defence framework documentation, including role descriptions and escalation procedures

- Training records: completion by role, assessment results, and materials used

Controls Evidence

- Transaction monitoring system documentation: rules in production, threshold rationale, tuning exercise history, and outcomes

- Alert disposition data for the examination period: volumes, close rates, escalation rates, and investigation SLAs. Examiners assess whether close rates are defensible. A 90% false positive rate without documented calibration evidence is not a programme strength; it is an unresolved question about whether real alerts are being buried.

- SAR register: all filings in scope, with dates, narrative summaries, and evidence of timely submission

- No-SAR decision log: documented rationale for referrals closed without external filing

- CDD and Enhanced Due Diligence (EDD) file samples across the institution's risk spectrum — high-risk customers, PEPs, and correspondent relationships

- Sanctions screening configuration and periodic testing evidence

Authority and Independence Evidence

- MLRO appointment documentation, with evidence that the role carries unilateral SAR-filing authority

- Reporting line documentation confirming the MLRO does not report through a business revenue function

- Board escalation mechanism: the documented path by which the MLRO can raise material concerns directly to the Board Risk Committee or Audit Committee without requiring prior approval from the CEO or a business head

- Conflict of interest declarations for the compliance function

- Resource assessments: staffing levels, vacancy history, budget, and technology capacity

Remediation Evidence

- Internal audit findings log with severity classifications, accountable owners, target dates, and current status

- Regulatory correspondence log for the examination period

When an examination notification arrives, the MLRO's immediate task is to confirm that all documentation in these categories is current, internally consistent, and accessible. Gaps identified at that point must be escalated to senior management with a documented rationale for the current state, not absorbed or quietly corrected.
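The "is it current?" part of that check can be run continuously rather than at notification. The sketch below flags documents whose last review date falls outside a maximum age. The 12-month window comes from the EWRA item in the checklist; applying the same window to other documents, the `stale_documents` name, and the document labels are assumptions for illustration only.

```python
from datetime import date, timedelta


def stale_documents(
    last_reviewed: dict[str, date],
    as_of: date,
    max_age_days: int = 365,  # assumed window; set per the institution's policy
) -> list[str]:
    """Return names of documents last reviewed before the cutoff date."""
    cutoff = as_of - timedelta(days=max_age_days)
    return sorted(name for name, reviewed in last_reviewed.items() if reviewed < cutoff)


evidence_pack = {
    "EWRA": date(2024, 3, 1),
    "AML/CFT policy": date(2025, 1, 15),
    "Sanctions screening test": date(2024, 11, 30),
}
gaps = stale_documents(evidence_pack, as_of=date(2025, 6, 30))
```

Anything returned by a check like this is a gap to escalate with a documented rationale, in line with the paragraph above, rather than something to fix silently.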

Escalation Protocols: The Governance Failure That Precedes Every Other Failure

Enforcement actions trace back to escalation failures more often than to undetected transactions. The pattern is consistent: a referral reaches a relationship manager, stays with an account manager, and is closed with a note. An MLRO identifies a control gap, raises it informally with a business head, receives assurances, and the exchange is never documented.

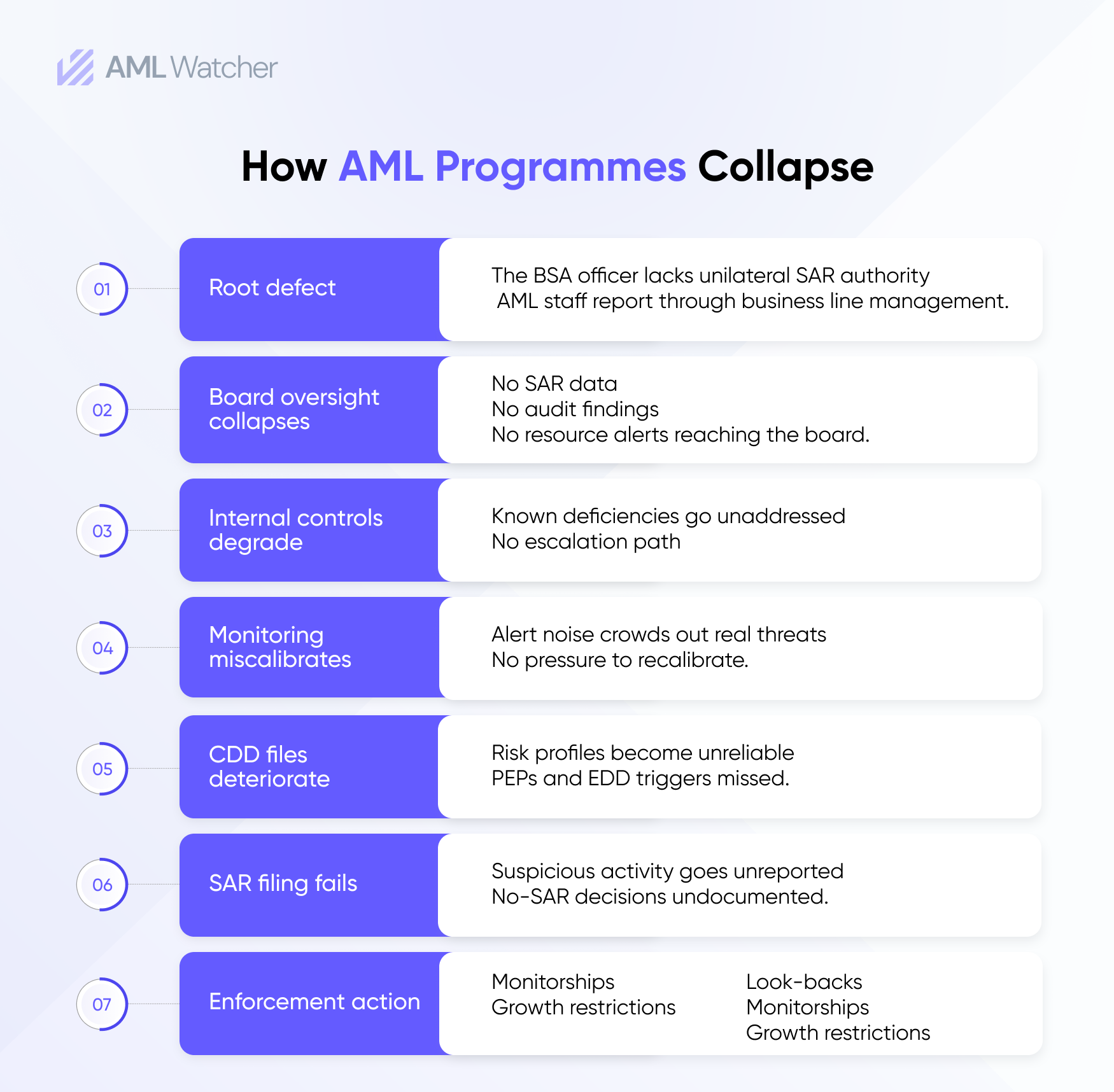

How AML Programmes Collapse: The Escalation Failure Sequence

| Stage | What Happens | Governance Failure |
| --- | --- | --- |
| 1. Referral | Suspicious activity identified by front-line staff | No documented referral pathway, or one that exists but is not tested |
| 2. Routing | Referral sent to the relationship manager, not MLRO | Reporting line passes through the revenue function |
| 3. Disposition | Account manager closes referral without MLRO review | No mandatory escalation to the second line; no documented rationale |
| 4. Escalation block | MLRO identifies a control gap; raises informally with the business head | No documented escalation; assurances given verbally |
| 5. Examination | Regulator requests escalation records; none exist | Governance deficiency cited; enforcement action follows |

A functioning escalation protocol has three components that must all be present:

- Defined pathways: Every employee must know how to refer a suspicion to the MLRO function. The referral mechanism must be documented, accessible, and periodically tested. Internal SAR forms, dedicated reporting channels, and explicit guidance on what constitutes a referral obligation are minimum requirements.

- Response discipline: The MLRO function must document every referral received, the decision taken, and the rationale, including decisions not to file externally. Regulators assess the quality of no-SAR decisions as closely as that of filed SARs. Contemporaneous documentation is the only evidence of sound judgment.

- Board escalation access: The MLRO must have a defined, documented mechanism to escalate material concerns, programme deficiencies, resource constraints, and business pressure on compliance decisions directly to the Board Risk Committee or Audit Committee, without requiring prior approval from any business head or the CEO. This access must appear in the MLRO's terms of appointment and in the Board Risk Committee's terms of reference.

The 2024–2025 enforcement record is explicit: institutions where the BSA officer lacked this structural independence were cited not because a specific filing was missed, but because the governance architecture could not guarantee that filings would be made when they should be.
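The response discipline component can be enforced structurally as well as procedurally. As a minimal sketch, the record type below refuses to exist without a rationale, so a no-SAR decision cannot be logged as a bare "closed". The `ReferralRecord` and `Decision` names and the field set are hypothetical, chosen only to mirror the three things the text says must be documented: the referral received, the decision taken, and the rationale.

```python
from dataclasses import dataclass
from datetime import datetime
from enum import Enum


class Decision(Enum):
    FILE_SAR = "file_sar"
    NO_SAR = "no_sar"


@dataclass(frozen=True)  # frozen: a logged decision is not edited in place
class ReferralRecord:
    referral_id: str
    received_at: datetime
    decision: Decision
    rationale: str
    decided_by: str
    decided_at: datetime

    def __post_init__(self) -> None:
        # Every decision, including a decision not to file, needs a rationale.
        if not self.rationale.strip():
            raise ValueError("A documented rationale is required for every decision")
        if self.decided_at < self.received_at:
            raise ValueError("Decision cannot predate receipt of the referral")


record = ReferralRecord(
    referral_id="REF-0001",
    received_at=datetime(2025, 2, 3, 9, 30),
    decision=Decision.NO_SAR,
    rationale="Activity consistent with documented payroll pattern; evidence on file.",
    decided_by="MLRO",
    decided_at=datetime(2025, 2, 5, 16, 0),
)
```

The design choice here is simply that the log rejects incomplete entries at creation time, which is one way of making contemporaneous documentation the default rather than an afterthought.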

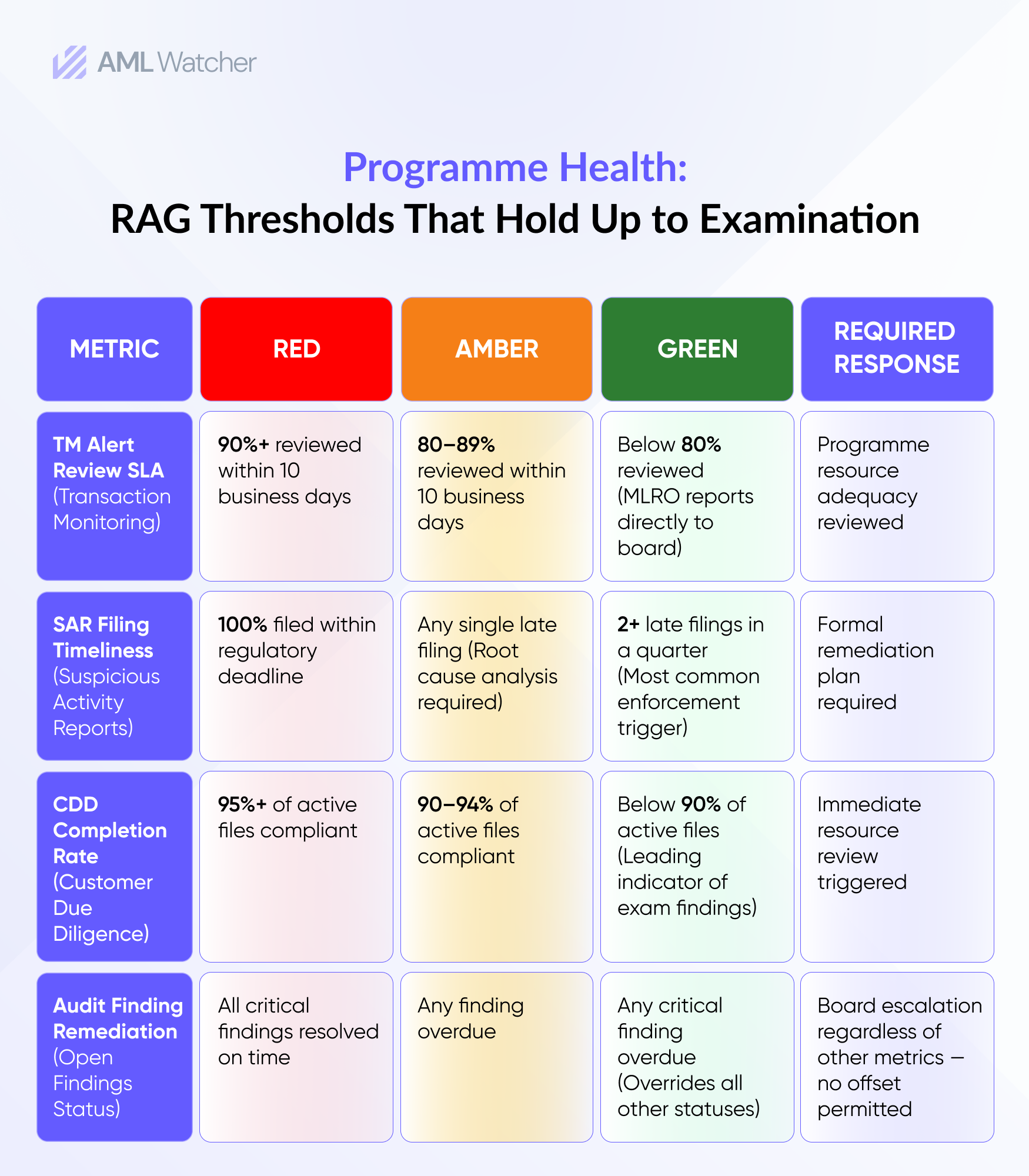

Programme Health Metrics: Replacing the Traffic Light

The RAG dashboard is not inherently flawed, but it is almost always applied in ways that obscure rather than communicate. A programme rated Green while carrying a six-week investigation backlog, a 90% false positive rate, and three open audit findings is not a Green programme. It is a programme with an inadequate reporting standard.

Programme health metrics should be grounded in quantitative thresholds, approved by the Board Risk Committee in advance, applied consistently, and treated as non-negotiable triggers for escalation. The following framework replaces qualitative colour ratings with defensible, pre-approved thresholds.

Programme Health: RAG Thresholds That Hold Up to Examination

Thresholds defined in advance and approved by the board create accountability that qualitative assessment cannot. They also create a documented record that the board was informed of programme status on a factual basis, a record that matters when regulators ask whether oversight was genuinely exercised or merely performed.
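A minimal sketch of how pre-approved thresholds might drive a rating mechanically is shown here. The threshold values, metric names, and the "worst metric sets the overall rating" rule are all hypothetical placeholders, not recommended figures; in practice they would be whatever the Board Risk Committee has approved. The sketch also assumes every metric is "higher is worse".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    amber_at: float  # value at or above which the metric turns Amber
    red_at: float    # value at or above which the metric turns Red

    def rate(self, value: float) -> str:
        if value >= self.red_at:
            return "Red"
        if value >= self.amber_at:
            return "Amber"
        return "Green"


# Hypothetical, illustrative thresholds only (board-approved values would go here).
THRESHOLDS = {
    "backlog_weeks": Threshold(amber_at=2, red_at=4),
    "false_positive_rate": Threshold(amber_at=0.85, red_at=0.95),
    "open_audit_findings": Threshold(amber_at=1, red_at=5),
}

SEVERITY = ["Green", "Amber", "Red"]


def programme_rating(metrics: dict[str, float]) -> tuple[str, dict[str, str]]:
    """Rate each metric, then take the worst rating as the overall status."""
    ratings = {name: THRESHOLDS[name].rate(value) for name, value in metrics.items()}
    overall = max(ratings.values(), key=SEVERITY.index, default="Green")
    return overall, ratings


overall, detail = programme_rating(
    {"backlog_weeks": 6, "false_positive_rate": 0.90, "open_audit_findings": 3}
)
```

Under these placeholder thresholds, the programme described earlier (six-week backlog, 90% false positive rate, three open audit findings) rates Red overall, with the backlog as the driver, so it cannot be presented as Green.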

How AML Watcher Supports MLRO Governance

The question examiners ask is no longer whether you have a transaction monitoring system. It is whether you can demonstrate with contemporaneous, auditable records that it produced defensible outcomes at every point in the investigation cycle.

AML Watcher directly supports that standard across four areas: audit-ready reporting structured around what examiners actually request; TruRisk calibration that reduces false positives by 44% and manual review workload by 70–80%; sanctions screening data updated every 15 minutes across 215+ regimes; and PEP screening records that evidence all four FATF PEP levels assessed, not just the screening result.

The 2024 enforcement record is the benchmark. The next examination will ask whether your oversight was real. The answer needs to be in writing before the notification letter arrives.